Built to Scale AI. Engineered for Builders.

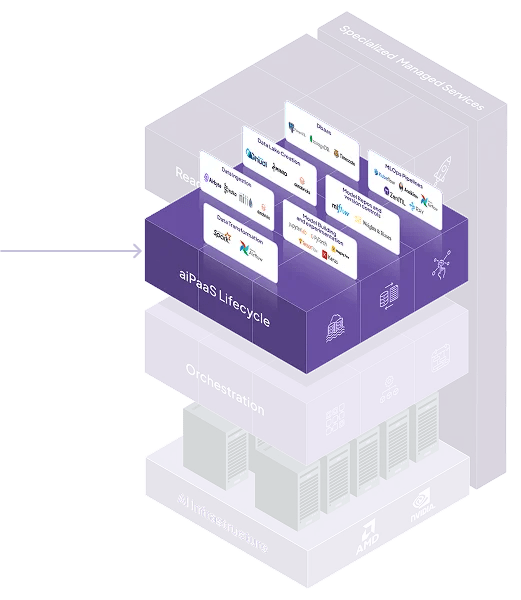

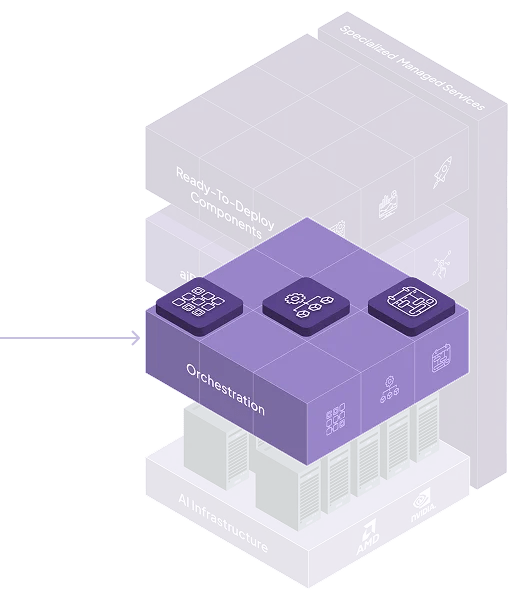

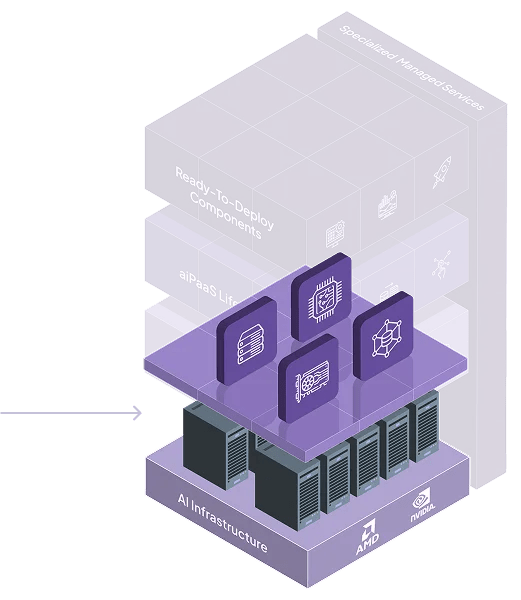

Modular, cloud-agnostic, and microservices-first — Neysa Velocis gives your teams speed, control, and freedom to innovate without infrastructure drag.

Simple by Design. Powerful by Purpose.

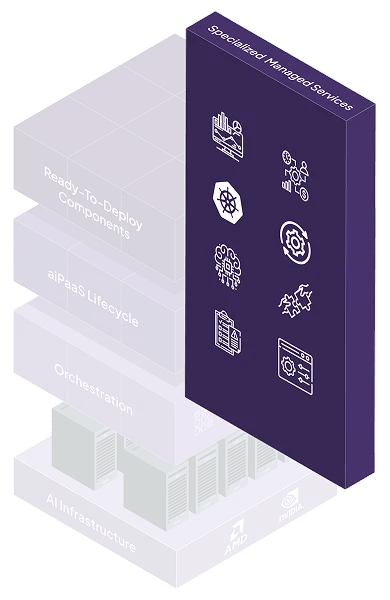

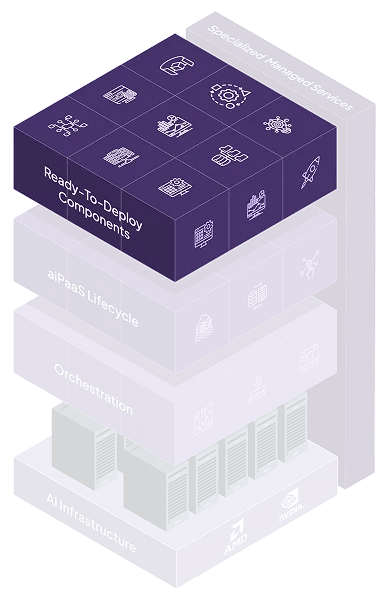

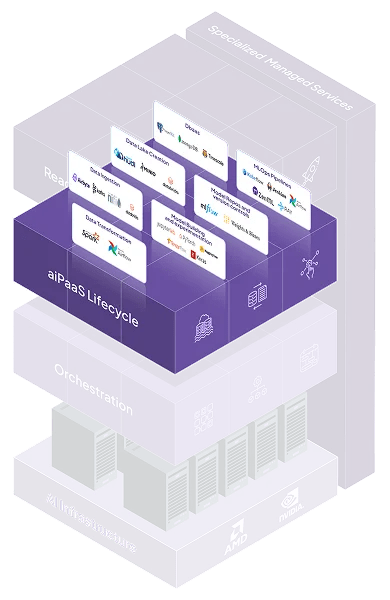

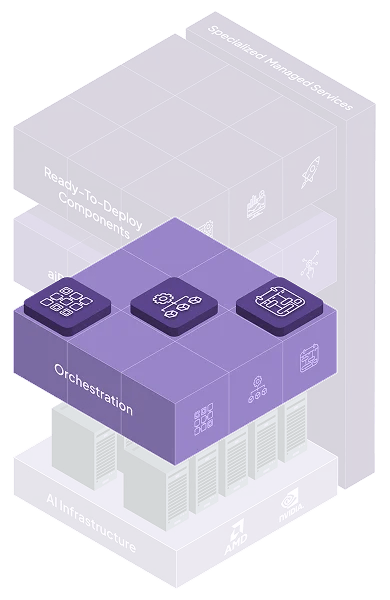

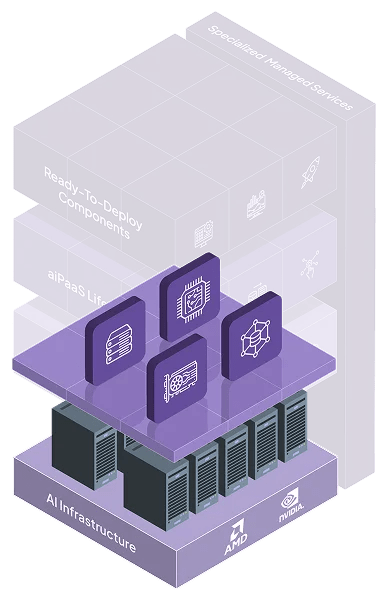

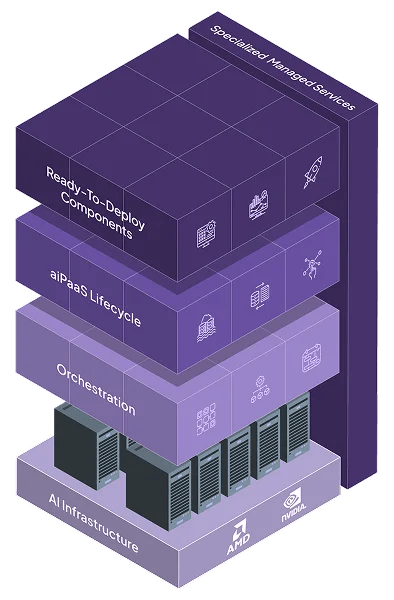

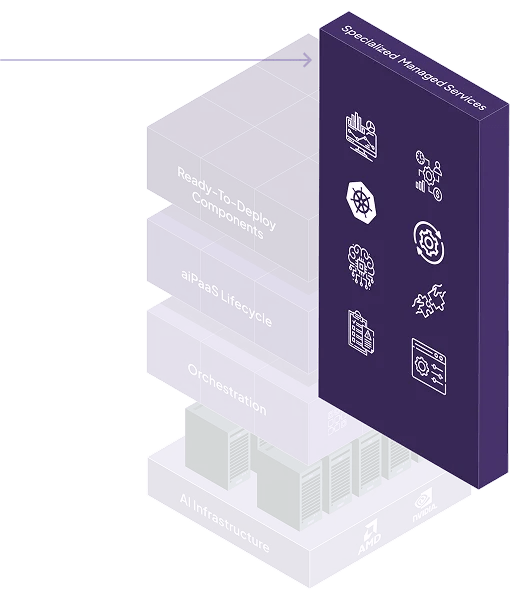

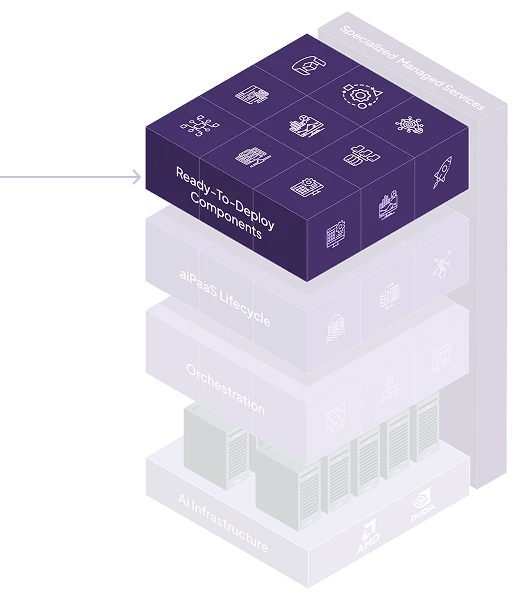

At the heart of Neysa Velocis is a flexible, distributed architecture that abstracts away infrastructure complexity while giving technical users complete control, visibility, and extensibility.

A System That Thinks Like You Do

Every layer of Neysa Velocis is modular, API-driven, and secure by design — giving developers the flexibility to move fast and stay in control.

Works with Your Stack. Integrates Like It Belongs.

Whether it’s Git, MLflow, or your IAM system, Velocis plugs in fast, plays well across clouds, and brings your workflows up to speed.

| Category | Integrations |
| --- | --- |
| Identity & Access | SSO, SAML, LDAP, RBAC |
| Data & Storage | S3, Azure Blob, HDFS, NFS, Weka |
| Dev & MLOps | GitHub/GitLab, MLflow, Docker, Kubeflow |
| Security & Compliance | SIEM tools, policy enforcement engines |
| Cloud Connectivity | Public/Private cloud, hybrid deployments, VPC support |

Want to See Velocis in Action? Let’s Dive In.

Speak with a solution architect, explore the API docs, or test-drive the platform with your own workloads.